A path to AI impact

Authors

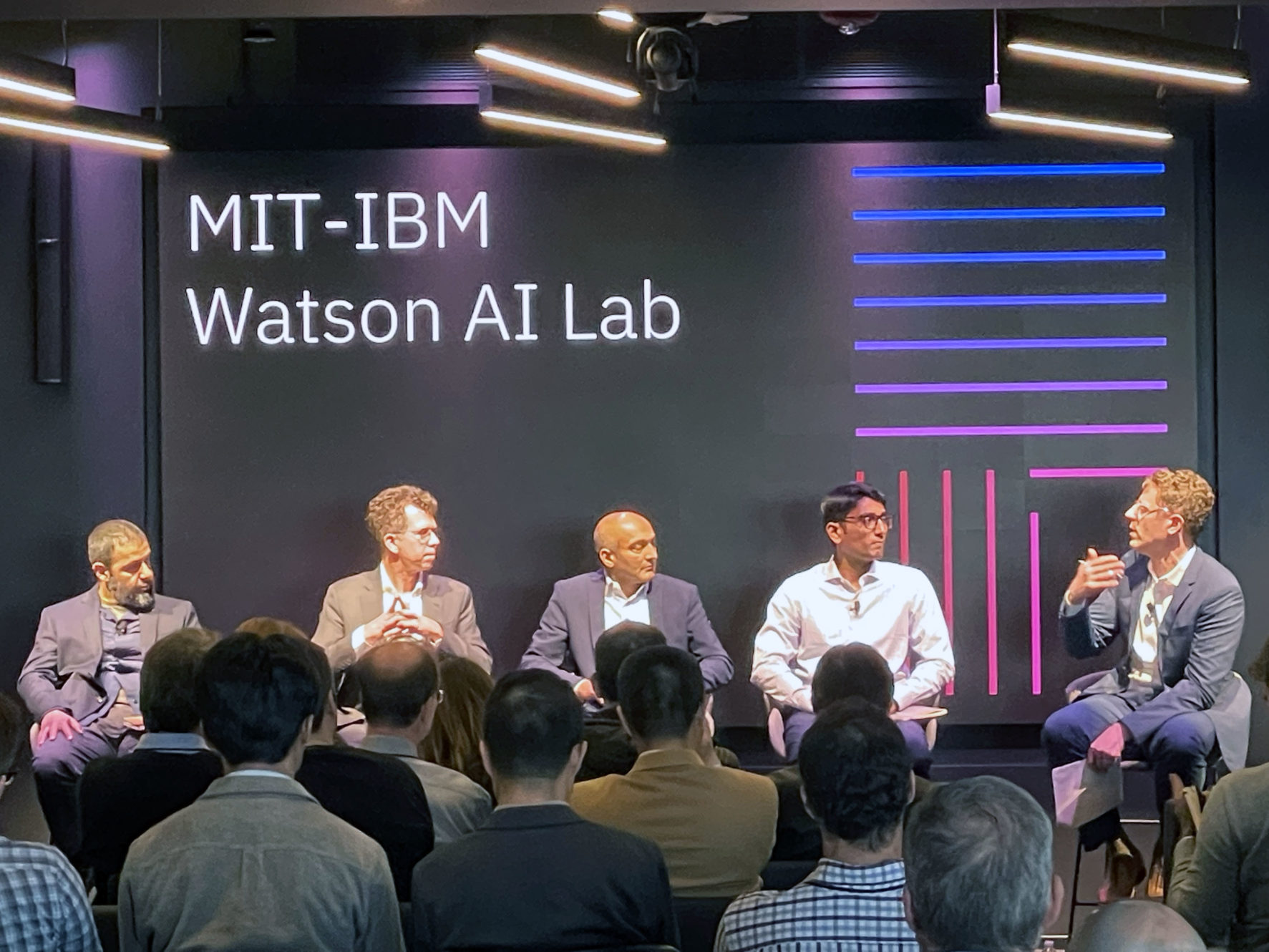

The MIT-IBM Watson AI Lab propels research in the field of AI/machine learning and computing from theory to practice at its annual Industry Showcase event.

In May, the MIT-IBM Watson AI Lab opened its doors to researchers from both institutions, as well as its member companies, to discuss the shared goal of translating AI research to impact for business and society.

Over the course of the day, Industry Showcase executives, attendees, and Lab collaborators concentrated on a key theme: deployment. As part of member-specific benefits, attendees with technical backgrounds spent the first half of the day meeting with research teams for tailored discussions and assessments of ongoing projects, including educational overviews of an AI topic related to their work, progress updates, technical requirements, and scoping. A guided tour of MIT’s Computer Science and Artificial Intelligence Lab (CSAIL) followed. Executives met with the Lab’s co-directors, Aude Oliva of MIT and David Cox of IBM Research, who offered a look ahead at the future of AI and the Lab’s role in it, including an overview of the Lab’s research strategy and portfolio and an update on its engagement with MIT’s Schwarzman College of Computing.

“Incorporation of machine learning into a business strategy is no longer an option — whole industries are witnessing revolutions to, not only automate, but to gain an edge with the technology,” says Aude Oliva, MIT director of the MIT–IBM Watson AI Lab and director of strategic industry engagement in the MIT Schwarzman College of Computing. “Through corporation membership, including consultation, steering meetings, and research exploration, the Lab is helping to open doors and discover new ones in an efficient and effective manner. Our project portfolio for the upcoming year will build on this, looking into accelerating impact for many industry use-cases ranging from AI models discovering chemicals and materials aligned with our planet’s wellness, to optimization algorithms that smooth supply chain management.”

Translating AI research into business impact

Machine learning, particularly deep learning, is a powerful approach to analyzing logistical data, for example to forecast demand, but its costs may not justify the added benefit. During the showcase’s keynote talk, economist Neil Thompson, a researcher with the Lab, MIT’s Computer Science and Artificial Intelligence Lab (CSAIL), and MIT’s Initiative on the Digital Economy, shared case studies from his group examining and modeling the financial, compute, data, and human infrastructure needed for effective implementation, as well as the projected returns on this investment. Through his client interviews, Thompson reported that 60% of the organizations said that deep learning was their most important method of analysis. Many businesses use it to automate tasks involving vision and language, which have traditionally been difficult for computers. The second piece, he noted, is that many companies are weighing the cost of deployment to achieve new goals, which requires building a specialized system. For example, Thompson argues that producing a truly novel program like AlphaGo, which was the first machine to beat a professional Go player, may be worth the $35 million price tag, because it demonstrates new abilities and provides cutting-edge insights. However, using deep learning to forecast supermarket item demand may improve prediction capabilities by a third, reducing spoilage and avoiding overstocking of shelf-safe food, but at a cost that isn’t feasible.

Knowing that there was some balance that could be struck, Thompson and his team asked, “is there something predictable about the way deep learning solves these kinds of problems, at a sort of statistical level, that will allow us to do some prediction about how much these things [implementation] might actually cost?” To address this, they developed a model that calculates how much computing power (along with the associated greenhouse gas emissions and training computation) would be required to achieve a particular level of error in predictions, giving businesses a way to estimate costs before sinking money into a system that will later be abandoned. “So actually … the most important cost that you care about [computation], we can actually do an estimate of how much these things are going to cost,” said Thompson. They also considered similar analyses for jewelry stores in India and for radiology labs. In the first case, a store has little inventory and wants novel designs; in the second, the high cost of computing might be justified by lower error rates and earlier cancer detection. So, “there is this interesting thing that the statistical properties of deep learning allow us to make predictions with reasonable accuracy, about how big your systems are going to have to be and therefore about the cost.”
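The article does not give the form of Thompson's model, so the following is only an illustration of the general idea. It is a minimal sketch that assumes prediction error falls as a power law of training compute, fits that curve to a few pilot runs, and inverts it to estimate the compute (and cost) needed for a target error. The function names, the pilot numbers, and the price per compute unit are all hypothetical.

```python
import math


def fit_power_law(compute, error):
    """Fit error = a * compute**(-b) by least squares in log-log space.

    Assumption: a power law is used here for illustration; it is not
    taken from the article.
    """
    xs = [math.log(c) for c in compute]
    ys = [math.log(e) for e in error]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx                      # equals -b
    a = math.exp(mean_y - slope * mean_x)  # intercept in log space
    return a, -slope


def compute_for_error(a, b, target_error):
    """Invert the fitted curve: compute needed to reach target_error."""
    return (a / target_error) ** (1.0 / b)


def estimated_cost(a, b, target_error, dollars_per_unit):
    """Compute requirement times a (hypothetical) price per compute unit."""
    return compute_for_error(a, b, target_error) * dollars_per_unit


# Hypothetical pilot runs: error halves each time compute grows tenfold.
pilot_compute = [1e3, 1e4, 1e5, 1e6]
pilot_error = [0.40, 0.20, 0.10, 0.05]

a, b = fit_power_law(pilot_compute, pilot_error)
needed = compute_for_error(a, b, target_error=0.025)
cost = estimated_cost(a, b, target_error=0.025, dollars_per_unit=0.01)
print(f"exponent b = {b:.3f}, compute needed = {needed:.3g}, cost = ${cost:,.0f}")
```

Under these made-up numbers, each further halving of the error multiplies the required compute by ten, which is the kind of steep trade-off that can make a modest accuracy gain uneconomical for a use case like supermarket demand forecasting.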

Following on, Thompson asked executives from IBM Research, Nexplore, Wells Fargo, and Boston Scientific, who have implemented deep learning within their teams and are working with the Lab to improve their systems, capabilities, and capacities, about their considerations and personal insights. The moderated panel discussion teased apart sector-specific issues, the evolution of solutions, and the trade-offs they are making as they employ machine learning and consult with the Lab on matters ranging from training, data, and resource management to high-level buy-in and risk management.

“We started out in the partnership with IBM and MIT because we set out some work with IBM research in neuro-space and from there, learning more about understanding of AI, and how do you really promote that in the organization and really make it part of the DNA,” said Maulik Nanavaty of Boston Scientific. “And we got started a few years ago, as part of a project, and it has been a great experience and a great journey as we go forward.”

Discovery and exploration

This work with the Lab and IBM Research is made possible by an era of “accelerated discovery,” Dario Gil, IBM senior vice president, director of IBM Research, and IBM Lab chair, shared during a short film and panel discussion on its significance and real-world implications. “In the moment we find ourselves is [at] the convergence of not just one technology, but three technologies that are going to alter how we practice science and how we develop technology. I like to summarize them as bits, plus neurons, plus qubits.” This revolution brings together classical computation and artificial intelligence inspired by biological brains with physics and information in the hybrid cloud and quantum computing. “Our objective is to accelerate the rate of discovery by a factor of 10x,” said Gil. “So, we want something that would have taken 10 years to do in one year, or something that cost $100 million to do in $10 million.” Drawing on this accelerated process of discovery, the Lab is endeavoring to solve some of the toughest problems of today, running the gamut from drug discovery and combating climate change to food production, creation of new computing and sustainable materials, and socioeconomic equality.

“The MIT-IBM Watson AI Lab is committed to AI research towards real world impact,” says Kate Soule, the Lab’s membership program lead. “By partnering with member companies, we ensure our new AI technologies solve the biggest challenges faced by industry today.”

The demo showcase in the afternoon highlighted the variety and impact of the research, and its applications, coming out of the Lab. Stations arranged around the Lab, crafted by IBM Research’s UX engineers Lucy Yip and Ja Young Lee in collaboration with Lab researchers, allowed attendees to explore work being done with member companies and researchers in the areas of accelerated discovery, virtual reality and simulations, computer vision, data-driven decision making, natural language processing, AI safety and trusted AI, and robotics.

“The MIT-IBM Watson AI Lab is a unique academic-industrial collaboration that brings together cutting edge AI research with real-world problems faced by businesses,” says David Cox, IBM director of the MIT-IBM Watson AI Lab. “It’s been exciting to see MIT, IBM, and our MIT-IBM member companies coming together in a whole that is much larger than the sum of its parts. Thanks to the strength of this ecosystem, we believe that we have the opportunity to define a new gold standard for driving research into business impact.”